CX Trends 2026: AI Transparency in Customer Experience Explained

Introduction

AI is making more decisions that affect customers than ever. In 2026, customers expect clear explanations for those decisions.

Key Takeaways

- Being transparent about AI in CX is now a business requirement, not just an ethical concern.

- Regulated industries take on bigger risks if they don’t explain how AI makes decisions.

- When AI decisions are clearly explained, customer support teams experience fewer escalations and complaints.

- Clear explanations of AI decisions help build lasting trust with customers.

- Strong AI governance is now an essential part of leading customer experience teams.

- Customers are more willing to accept a “no” when they understand the reason.

Why AI Transparency in Customer Experience Matters in 2026

AI is now involved in:

- Refund approvals

- Fraud flags

- Loan or eligibility checks

- Account suspensions

- Complaint prioritisation

Five years ago, most customers didn’t question automated decisions. Now, they want to know how and why those decisions are made.

When a decision affects someone’s money, access, or reputation, customers expect clear explanations. That’s why AI transparency in customer experience is now a top concern for big companies and regulated industries.

A simple “the system declined your request” is no longer acceptable.

Customers expect:

- A reason

- A policy reference

- A path to review

- A human option

Without these explanations, trust in AI-powered customer service begins to erode.

Where AI Decisions Create the Most Friction

Not every automated action needs a detailed explanation. Issues come up when AI changes the outcome, not just the speed.

The biggest risk areas include:

1. Financial and Eligibility Decisions

Credit approvals, insurance underwriting, subscription access, or refunds.

2. Fraud and Risk Scoring

Blocked transactions and account freezes.

3. Prioritisation

VIP routing or complaint escalation logic.

4. Policy Enforcement

Late fees, service limits, and compensation rules.

In regulated fields such as financial services, healthcare, utilities, and insurance, these decisions are often reviewed for compliance.

If you’re unsure what regulators expect, the OECD AI Principles are a useful starting point. They set out internationally agreed principles that include transparency, explainability, and accountability.

When customers don’t understand why something happened, they may see it as unfair. This can lead to more complaints, escalations, and harm to your reputation.

What Good AI Decision Explanation Looks Like

Explaining AI decisions to customers does not mean you have to reveal your algorithms. It means giving clear and simple reasons for decisions.

Here’s the difference:

Instead of:

“Your claim was rejected.”

Say:

“Your claim was declined because it was submitted outside the 30-day policy window. You can request a manual review within 7 days.”

A good AI decision explanation includes:

- Clear reasoning

- Reference to policy

- Actionable next steps

- Option for human review
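As an illustration, the four elements above could be captured in a small message template. This is a minimal Python sketch, not a reference to any specific product: the `DecisionExplanation` class, its field names, the policy label, and the human-review wording are all hypothetical, and the remaining values come from the claim example above.

```python
from dataclasses import dataclass


@dataclass
class DecisionExplanation:
    """Hypothetical container for the elements of a customer-facing explanation."""
    outcome: str           # what was decided, in plain language
    reason: str            # clear reasoning
    policy_reference: str  # the policy the decision relies on (placeholder label)
    next_step: str         # actionable next step for the customer
    human_review: str      # how a person gets involved (placeholder wording)

    def render(self) -> str:
        """Build one calm, consistent message from the parts."""
        return (
            f"{self.outcome} because {self.reason} "
            f"({self.policy_reference}). "
            f"{self.next_step} {self.human_review}"
        )


message = DecisionExplanation(
    outcome="Your claim was declined",
    reason="it was submitted outside the 30-day policy window",
    policy_reference="claims policy, submission window",
    next_step="You can request a manual review within 7 days.",
    human_review="A support agent will handle the review.",
).render()
print(message)
```

Keeping every message in one structure like this is one way to make sure no element is left out, whichever channel sends it.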

Your explanations should always be consistent and calm, not defensive.

Transparency isn’t about giving more information. It’s about making sure the right things are explained clearly.

Why Transparency Builds AI Trust in Customer Service

Customers know they won’t always get the outcome they want.

But they do expect fairness.

Research in behavioural economics suggests that people are more likely to accept a negative outcome when the process behind it feels fair.

That’s why trust in AI-powered customer service relies so much on clear explanations.

A clear explanation for a “no” builds more confidence than a “yes” with no explanation.

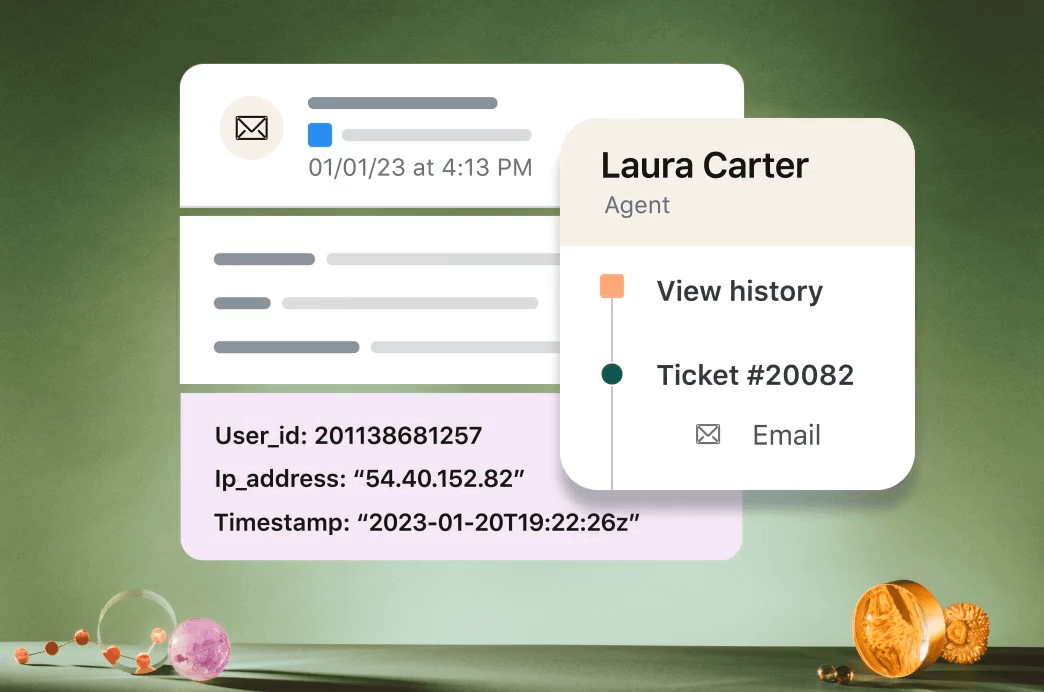

In enterprise environments, transparency also protects you internally:

- Legal teams want documented reasoning.

- Risk teams want audit trails.

- Executives want defensible decisions.
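One way to give legal and risk teams what they ask for is to store a structured record alongside every automated decision. The sketch below is only an assumption about what such a record might contain. The function name `audit_record`, the field names, and the example values are hypothetical.

```python
import json
from datetime import datetime, timezone


def audit_record(decision_id: str, outcome: str, reason: str, policy_reference: str) -> str:
    """Return one JSON line documenting an automated decision (hypothetical schema)."""
    record = {
        "decision_id": decision_id,
        "outcome": outcome,
        "reason": reason,                      # the documented reasoning
        "policy_reference": policy_reference,  # what makes the decision defensible
        "recorded_at": datetime.now(timezone.utc).isoformat(),
    }
    return json.dumps(record, sort_keys=True)


line = audit_record(
    decision_id="example-001",
    outcome="declined",
    reason="submitted outside the 30-day policy window",
    policy_reference="claims policy, submission window",
)
print(line)
```

Appending one such line per decision to a log would give a simple audit trail that reuses the reasoning shown to the customer.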

AI transparency in customer experience helps both customers and the organisation.

How CX Leaders Should Approach AI Governance

AI governance isn’t just an IT job anymore. CX leaders are responsible too.

Here is a practical starting framework:

1. Map AI Decision Points

Write down where AI directly affects customer outcomes.

2. Define Explanation Standards

Make templates for approval, rejection, and escalation messages.

3. Align With Compliance Early

Work with your legal team before regulators start asking questions.

For reference, the EU AI Act overview shows how AI regulation is evolving in the EU.

4. Train Support Teams

Agents need to understand AI logic well enough to explain it clearly.

5. Keep a Human Escalation Path

Customers should always know how to ask for a review of an AI decision.
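Steps 1, 2, and 5 above lend themselves to a simple inventory check. The following Python sketch assumes a hypothetical in-house list of decision points. The `DecisionPoint` fields, the `find_gaps` helper, and the example entries are illustrative only.

```python
from dataclasses import dataclass


@dataclass
class DecisionPoint:
    """One place where AI directly affects a customer outcome (hypothetical fields)."""
    name: str
    has_explanation_template: bool
    has_human_escalation: bool


def find_gaps(points: list[DecisionPoint]) -> list[str]:
    """List decision points missing a template or a human escalation path."""
    gaps = []
    for p in points:
        if not p.has_explanation_template:
            gaps.append(f"{p.name}: no explanation template")
        if not p.has_human_escalation:
            gaps.append(f"{p.name}: no human escalation path")
    return gaps


# Made-up inventory for illustration
inventory = [
    DecisionPoint("refund approvals", True, True),
    DecisionPoint("fraud flags", False, True),
    DecisionPoint("account suspensions", True, False),
]
gaps = find_gaps(inventory)
for gap in gaps:
    print(gap)
```

A check like this could be run whenever a new automated decision is introduced, so gaps surface before customers or regulators find them.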

AI transparency in customer experience isn’t about slowing down automation. It’s about taking responsibility for the decisions your systems make.

AI Transparency Is the Next CX Differentiator

In 2024 and 2025, companies focused on increasing automation.

In 2026, the focus is shifting to accountability.

Enterprise teams that invest in explainable AI for customer support can expect:

- Fewer escalations

- Lower complaint volumes

- Stronger customer trust

- Reduced regulatory exposure

The real risk isn’t in using AI itself.

The risk comes from using AI that you can’t explain.

Where This Fits in CX Trends for 2026

We have brought these ideas together from the CX Trends 2026 report, which includes practical examples and advice for CX leaders.


As a Zendesk Premier Partner, Gravity CX works with teams to apply these changes in real support environments.

Frequently Asked Questions

What is AI transparency in customer experience?

AI transparency in customer experience means clearly explaining how automated decisions impact customers, especially in approvals, fraud checks, and policy enforcement.

Why is explainable AI in customer support important?

When AI decisions are clearly explained, customer support teams receive fewer complaints, build greater trust, and help protect organisations in regulated industries.

How does trust in AI-powered customer service improve with transparency?

Trust in AI-powered customer service grows when customers know why a decision was made and have a clear way to appeal or ask for a review.

What is an AI decision explanation?

An AI decision explanation is a simple, clear reason given to a customer that explains why an automated system made a certain decision.

Do regulated industries need greater AI transparency?

Yes. Financial services, healthcare, insurance, and utilities face higher compliance requirements and greater reputational risk when AI decisions lack explanation.